AI as a Second Extractor in HEOR Systematic Reviews: A Practical Framework

TL;DR

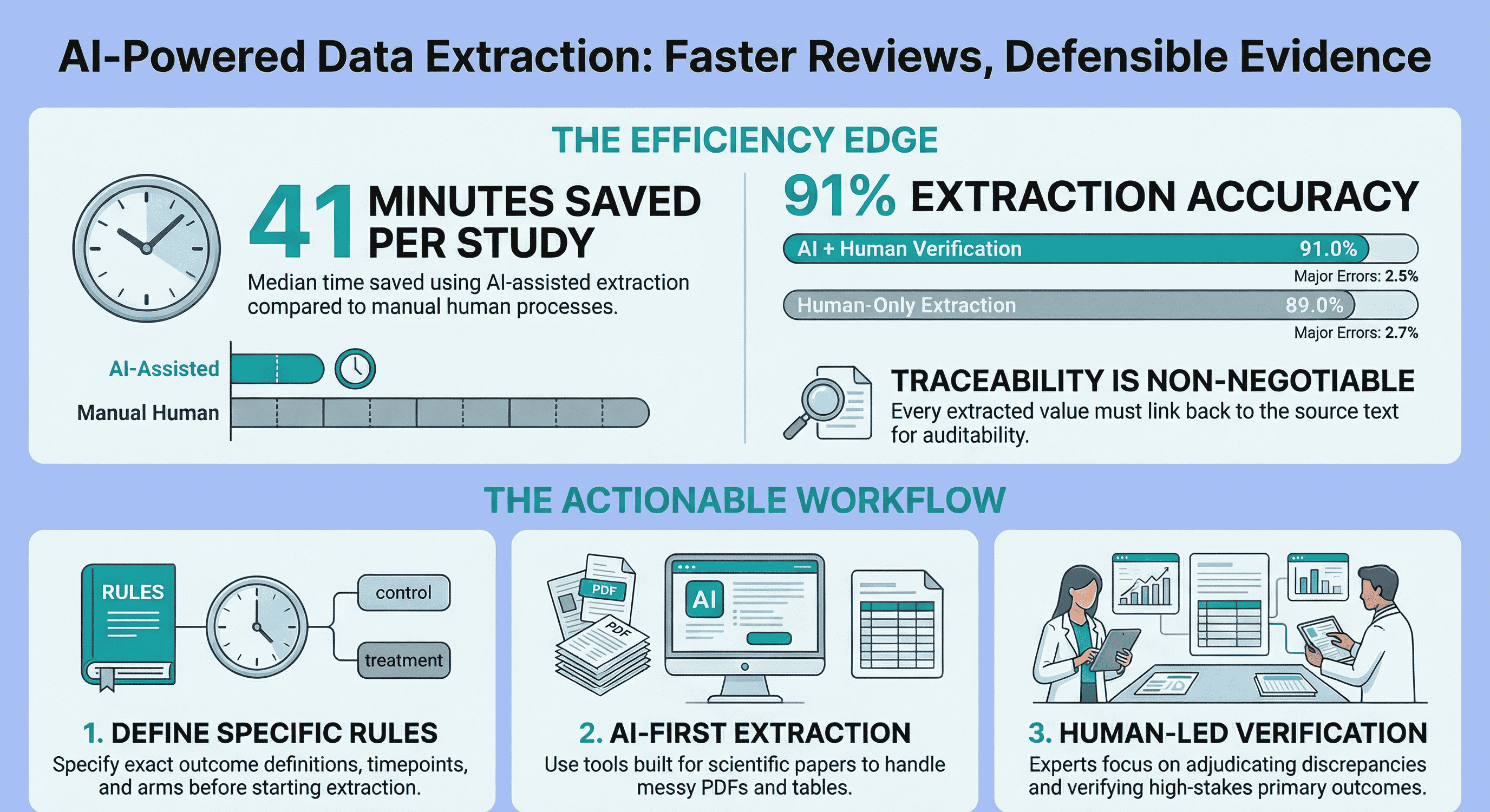

For HEOR and Market Access teams, the practical question is no longer "Can AI extract data?" The practical question is "Can AI safely replace the second extractor in parts of our workflow?"

In many workflows, the answer is yes, if three conditions are true:

- extraction fields are predefined and precise

- high-risk fields receive explicit human verification

- every critical value is traceable to source text/tables

Published evidence suggests AI-assisted extraction can save substantial time while maintaining comparable accuracy in well-controlled settings (PubMed: https://pubmed.ncbi.nlm.nih.gov/41183336/).

This article gives a practical framework to decide where AI should lead, where humans must lead, and how to keep extraction defendable for internal and payer-facing work.

HEOR evidence extraction fails less often because of bad tools and more often because of weak decision rules.

The usual pattern is familiar:

- outcome definitions vary across papers

- timepoints are not aligned before extraction starts

- comparator mapping gets messy in multi-arm studies

- teams spend more time reconciling than extracting

That is why this post does not focus on generic AI for PDF advice. It focuses on a specific operational decision for HEOR teams: when AI can safely take second-extractor duties, and when it cannot.

Why this topic matters specifically for HEOR and Market Access

HEOR extraction quality has downstream consequences:

- internal evidence reviews

- value narratives and reimbursement strategy

- HTA and payer-facing deliverables

- timelines that compress rapidly near decision points

So faster extraction alone is not the target. The target is faster extraction with defensibility.

That requires structured outputs, clear rules, and audit-ready traceability.

What "AI as second extractor" actually means

In practice, this is the model:

- A human team member defines extraction schema and decision rules.

- AI performs first-pass extraction into a structured table.

- A human verifier reviews critical fields and adjudicates conflicts.

- Outputs are exported with source traceability for QA and audit.

This is not fully automated evidence synthesis. It is workflow redesign where humans spend less time transcribing and more time deciding.

Decision framework: when AI should lead vs when humans should lead

Use this rule-of-thumb framework before launching extraction:

AI-leading zones (lower risk)

- clearly defined, standardized variables

- straightforward two-arm trial structures

- explicit outcome definitions and fixed timepoint rules

- directly reported statistics with unambiguous units

Human-leading zones (higher risk)

- multi-arm or adaptive/crossover designs

- ambiguous endpoint definitions across sections/tables

- subgroup-heavy reporting with inconsistent labels

- transformed/statistical fields (SD vs SE vs CI, change vs endpoint, ITT vs PP)

- partially missing or contradictory reporting

If most of your dataset is in the second list, AI can still help, but as a locator and drafting assistant rather than a primary extractor.

Common HEOR failure patterns (and control actions)

1) Arm/comparator mapping drift

Failure mode: values are extracted from the wrong dose group or subgroup. Control action: require explicit arm identifier columns and mandatory verifier sign-off for comparator-linked outcomes.

2) Timepoint substitution

Failure mode: nearest available timepoint is captured instead of predefined endpoint window. Control action: codify timepoint hierarchy before extraction (for example, week 12 primary, week 8 fallback only if week 12 is absent).

3) Statistical form confusion

Failure mode: correct number, wrong interpretation (mean change vs endpoint; SE entered as SD). Control action: include statistical-form fields and unit checks in the schema; force human review for transformed fields.

4) Silent missingness

Failure mode: absent data is replaced with plausible nearby values. Control action: require explicit missingness states ("not reported", "unclear", "not extractable") rather than blank cells.

A practical SOP for AI-assisted extraction in HEOR

Use the following process to reduce reconciliation load and keep outputs defendable.

Step 1: Lock schema and definitions first

Pilot on 3-5 studies. Refine variable definitions before scaling to full extraction.

Step 2: Define decision rules explicitly

At minimum:

- preferred analysis set (ITT vs PP)

- accepted timepoint windows

- handling of subgroup-only reporting

- denominator rules

- handling of conflicting numbers across text/tables/supplements

Step 3: Run AI first pass into structured output

Populate evidence-table fields, not prose summaries. Require value plus source snippet/location for critical fields.

Step 4: Verify by risk tier

- Tier A (always human-verified): primary outcomes, key comparators, effect sizes, adverse events

- Tier B (sample-verified): baseline descriptors, secondary outcomes with low decision impact

- Tier C (automation-tolerant): administrative metadata fields

Step 5: Reconcile and log adjudications

Capture why a value changed during verification. This creates a reusable ruleset for future projects.

How this compares with double human extraction

Double human extraction remains a strong standard in high-stakes contexts. But for many operational HEOR workflows, emerging evidence supports an AI-assisted model with human verification.

In one prospective study embedded in ongoing reviews:

- AI-assisted extraction: 91.0% accuracy

- human-only extraction: 89.0% accuracy

- major errors were similar

- median time savings: ~41 minutes per study Source: https://pubmed.ncbi.nlm.nih.gov/41183336/

Separate evaluations also suggest AI can perform strongly in second-reviewer roles, with confabulation/error risks that require verification controls:

- https://pubmed.ncbi.nlm.nih.gov/40661122/

- https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0313401

The operational takeaway is straightforward: use AI to reduce duplication, not to remove methodological accountability.

Tooling criteria for HEOR teams

When selecting an extraction platform, prioritize:

- scientific-paper aware parsing (sections + tables, not generic chat)

- structured outputs aligned to evidence tables

- explicit source traceability for critical fields

- easy export into team workflows (Excel / Sheets)

- support for an AI-first plus human-verification operating model

If you want to run this workflow directly in a purpose-built environment, EvidenceTableBuilder.com is designed for scientific PDFs, evidence tables, and auditability.

Related reading

- The Most Requested Feature Is Finally Here: Audit Trails

- How Best to Use EvidenceTableBuilder for Systematic Literature Reviews

- Analysis-Driven Design of Evidence Tables

- Best Practices for Data Extraction in Systematic Reviews

- What Columns Should an Evidence Table for a Systematic Review Include?

References

-

Artificial Intelligence-Assisted Data Extraction With a Large Language Model: A Study Within Reviews (PubMed) https://pubmed.ncbi.nlm.nih.gov/41183336/

-

Using Artificial Intelligence Tools as Second Reviewers for Data Extraction in Systematic Reviews (PubMed) https://pubmed.ncbi.nlm.nih.gov/40661122/

-

ChatGPT-4o can serve as the second rater for data extraction in systematic reviews (PLOS ONE) https://journals.plos.org/plosone/article?id=10.1371/journal.pone.0313401

Tags:

About the Author

Connect on LinkedInGeorge Burchell

George Burchell is a specialist in systematic literature reviews and scientific evidence synthesis with significant expertise in integrating advanced AI technologies and automation tools into the research process. With over four years of consulting and practical experience, he has developed and led multiple projects focused on accelerating and refining the workflow for systematic reviews within medical and scientific research.